Identity fraud attacks using AI are fooling biometric security systems

- Deepfake selfies can now bypass traditional verification systems

- Fraudsters are exploiting AI for synthetic identity creation

- Organizations must adopt advanced behavior-based detection methods

The latest Global Identity Fraud Report by AU10TIX reveals a new wave in identity fraud, largely driven by the industrialization of AI-based attacks.

Drawing on millions of transactions analyzed from July through September 2024, the report shows how digital platforms across sectors, particularly social media, payments, and crypto, are facing unprecedented challenges.

Fraud tactics have evolved from simple document forgeries to sophisticated synthetic identities, deepfake images, and automated bots that can bypass conventional verification systems.

Social media platforms experienced a dramatic escalation in automated bot attacks in the lead-up to the 2024 US presidential election. The report reveals that social media attacks accounted for 28% of all fraud attempts in Q3 2024, a notable jump from only 3% in Q1.

These attacks focus on disinformation and the manipulation of public opinion on a large scale. AU10TIX says these bot-driven disinformation campaigns employ advanced Generative AI (GenAI) elements to avoid detection, an innovation that has enabled attackers to scale their operations while evading traditional verification systems.

The GenAI-powered attacks began escalating in March 2024, peaked in September, and are believed to influence public perception by spreading false narratives and inflammatory content.

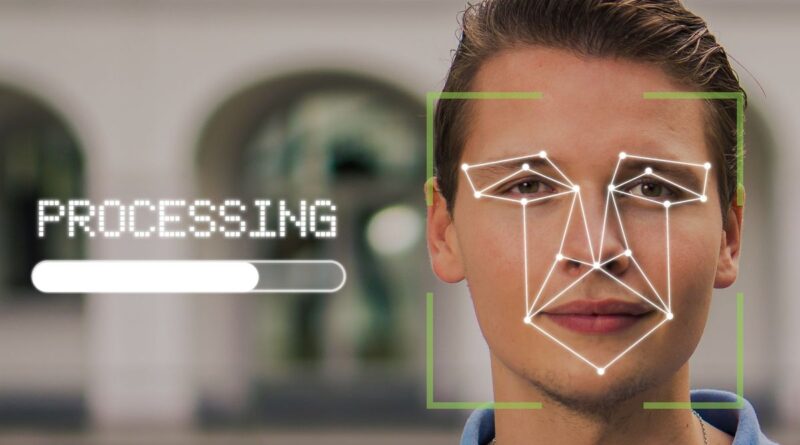

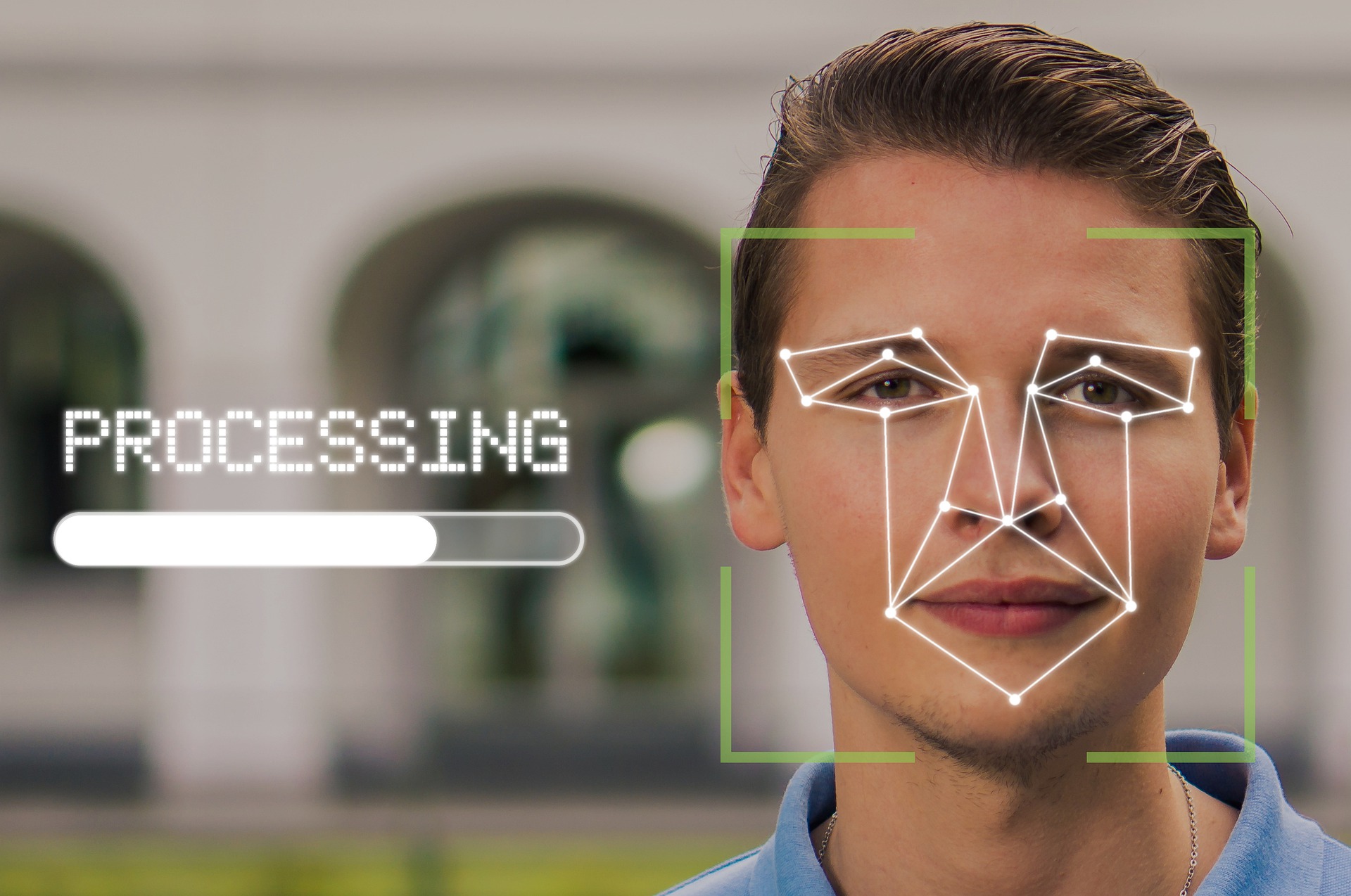

One of the most striking discoveries in the report involves the emergence of 100% deepfake synthetic selfies – hyper-realistic images created to mimic authentic facial features with the intention of bypassing verification systems.

Traditionally, selfies were considered a reliable method for biometric authentication, as the technology needed to convincingly fake a facial image was beyond the reach of most fraudsters.

AU10TIX highlights that these synthetic selfies pose a unique challenge to traditional KYC (Know Your Customer) procedures. The shift suggests that, moving forward, organizations relying solely on facial matching technology may need to re-evaluate and bolster their detection methods.

Furthermore, fraudsters are increasingly using AI to generate variations of synthetic identities through “image template” attacks. These involve manipulating a single ID template to create multiple unique identities, complete with randomized photo elements, document numbers, and other personal identifiers. This lets attackers quickly create fraudulent accounts across platforms at scale.

In the payments sector, the fraud rate declined in Q3, from 52% in Q2 to 39%. AU10TIX credits this progress to increased regulatory oversight and law enforcement interventions. Despite the reduction in direct attacks, the payments industry remains the most frequently targeted sector. Many fraudsters, deterred by heightened security, are redirecting their efforts toward the crypto market, which accounted for 31% of all attacks in Q3.

AU10TIX recommends that organizations move beyond traditional document-based verification methods. One critical recommendation is adopting behavior-based detection systems that go deeper than standard identity checks. By analyzing patterns in user behavior such as login routines, traffic sources, and other unique behavioral cues, companies can identify anomalies that indicate potentially fraudulent activity.
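To make the idea concrete, here is a minimal sketch of how a behavior-based check might compare a new login against a user's own history. It is an illustration only, not AU10TIX's method: the `LoginEvent` record, the `anomaly_reasons` function, the thresholds, and the traffic-source labels are all hypothetical, and the hour-of-day comparison is a deliberately simple z-score that ignores wrap-around at midnight.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass(frozen=True)
class LoginEvent:
    """Hypothetical record of one login; field names are illustrative."""
    user_id: str
    hour: int            # hour of day, 0-23
    traffic_source: str  # e.g. "organic", "referral", "paid_ad"


def anomaly_reasons(history, event, z_threshold=3.0, min_source_share=0.05):
    """Return the reasons (possibly none) why `event` deviates from `history`.

    The thresholds are arbitrary assumptions chosen for the example.
    """
    reasons = []

    # Login routine: is the hour far from this user's usual login hours?
    hours = [e.hour for e in history]
    if len(hours) >= 2:
        mu = mean(hours)
        sigma = pstdev(hours) or 1.0  # fall back to 1.0 if there is no spread
        if abs(event.hour - mu) / sigma > z_threshold:
            reasons.append("unusual_login_hour")

    # Traffic source: has this user rarely or never arrived this way before?
    sources = Counter(e.traffic_source for e in history)
    if history and sources[event.traffic_source] / len(history) < min_source_share:
        reasons.append("unusual_traffic_source")

    return reasons


# Usage: a user who normally logs in mid-morning from organic traffic.
history = [LoginEvent("u1", h, "organic") for h in (9, 10, 9, 11, 10, 9, 10, 10)]
print(anomaly_reasons(history, LoginEvent("u1", 10, "organic")))
print(anomaly_reasons(history, LoginEvent("u1", 3, "unknown_referrer")))
```

In this sketch the mid-morning login produces no flags, while the 3 a.m. login from an unseen source is flagged on both counts. A production system would likely combine many more signals and use a trained model rather than fixed thresholds.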

“Fraudsters are evolving faster than ever, leveraging AI to scale and execute their attacks, especially in the social media and payments sectors,” said Dan Yerushalmi, CEO of AU10TIX.

“While companies are using AI to bolster security, criminals are weaponizing the same technology to create synthetic selfies and fake documents, making detection almost impossible.”